Red Hat OpenShift Service on AWS (ROSA) clusters can be deployed in a few ways: public, private, and private with PrivateLink. With both public and private clusters, the OpenShift cluster itself is accessible from the internet; the choice only determines whether the application workloads running on OpenShift are private. However, some customers require both the OpenShift cluster and the application workloads to be private. The challenge this creates is that ROSA is a managed service, and Red Hat SRE teams must be able to monitor and manage the OpenShift cluster on behalf of the customer. If the cluster is completely private, how is this achieved?

In this post, we will address this challenge and touch on a subset of customer use cases and architectures.

Deploying ROSA private clusters with PrivateLink

ROSA private clusters with PrivateLink can be deployed via the ROSA CLI. However, they must be deployed into an existing VPC, so we will need to provide the IDs of three private subnets within that VPC.

Deploying ROSA in an interactive mode:

Or you can pass all options in a single command:

rosa create cluster --cluster-name rosaprivatelink --multi-az --region us-west-2 --version 4.8.2 --enable-autoscaling --min-replicas 3 --max-replicas 3 --machine-cidr 10.1.0.0/16 --service-cidr 172.30.0.0/16 --pod-cidr 10.128.0.0/14 --host-prefix 23 --private-link --subnet-ids subnet-06999e53d2a2ec991,subnet-0a19ca9a50cfd238e,subnet-01760325eb996a87b
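Before running the command, it can help to sanity-check the three CIDR ranges it passes. The following is a minimal sketch using only the Python standard library; the function names are ours, not part of the ROSA CLI, and it assumes `--host-prefix` is the prefix length of the subnet carved out of the pod CIDR for each node.

```python
import ipaddress
from itertools import combinations

# Values copied from the rosa create cluster command above.
CIDRS = {
    "machine": ipaddress.ip_network("10.1.0.0/16"),
    "service": ipaddress.ip_network("172.30.0.0/16"),
    "pod": ipaddress.ip_network("10.128.0.0/14"),
}
HOST_PREFIX = 23


def overlapping_pairs(cidrs):
    """Return the names of every pair of CIDR ranges that overlap."""
    return [
        (name_a, name_b)
        for (name_a, net_a), (name_b, net_b) in combinations(cidrs.items(), 2)
        if net_a.overlaps(net_b)
    ]


def pod_capacity(pod_cidr, host_prefix):
    """Return (number of per-node subnets, addresses in each per-node subnet)."""
    if host_prefix < pod_cidr.prefixlen:
        raise ValueError("host prefix must not be shorter than the pod CIDR prefix")
    node_subnets = 2 ** (host_prefix - pod_cidr.prefixlen)
    addresses_per_node = 2 ** (pod_cidr.max_prefixlen - host_prefix)
    return node_subnets, addresses_per_node


if __name__ == "__main__":
    print("overlapping ranges:", overlapping_pairs(CIDRS))
    print("node subnets, addresses per node:", pod_capacity(CIDRS["pod"], HOST_PREFIX))
```

An empty list of overlapping ranges is the result to look for. If the ROSA VPC will later route to other VPCs or to on premises, the same check can be extended with those CIDRs.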

The ROSA install process will still require the EC2 instances to have egress access to the internet, either via an internet gateway or via routing to an egress point elsewhere. We will discuss this in greater detail later when we look at the VPCs.

AWS resources created during the ROSA PrivateLink provisioning process:

The ROSA OpenShift cluster itself deploys in the same manner as other deployment options. There will still be Master, Infrastructure, and Worker nodes deployed into private subnets, spanning three AWS Availability Zones.

Notable changes include:

- Addition of AWS PrivateLink service endpoints and PrivateLink connections from the ROSA VPC to Red Hat SRE teams.

- Reduction of the number of AWS load balancers.

- No internet-facing AWS load balancers.

- The OpenShift installer checks no longer require an internet gateway.

With ROSA private clusters with PrivateLink, Red Hat SRE teams no longer require the cluster to be internet facing to monitor or perform actions on behalf of the customer. Instead, a PrivateLink endpoint is created and linked to each of the private subnets in the VPC.

The PrivateLink connects to a VPC that Red Hat owns and through which the SRE teams support and manage OpenShift. Should Red Hat SRE teams need to connect to the OpenShift web console or API, they will traverse the PrivateLink and connect to the AWS load balancers serving the OpenShift API and console. This reduces the number of AWS load balancers, as having both an internal and an internet-facing load balancer is no longer a requirement. Now, there is only a Classic Load Balancer providing access to the application workloads running on OpenShift and a Network Load Balancer providing access to the OpenShift API and console endpoint.

Customer use cases and VPCs for ROSA PrivateLink:

The first use case is customers looking for a managed OpenShift who require that neither the OpenShift cluster nor the application workloads be exposed to the internet, and who have no requirement for centralized egress. In this use case, customers can deploy a traditional VPC, which has public and private subnets, NAT gateways, internet gateways, etc.

This can be deployed via the Launch VPC Wizard in the console, or using the Amazon Virtual Private Cloud (Amazon VPC) Quick Start if infrastructure as code with AWS CloudFormation is preferred.

For customers that have strict networking and infrastructure policies, there may be a requirement for specific ingress and egress paths. This may result in VPCs needing to connect to other specific ingress and egress VPCs, or connect back to on premises. AWS provides a variety of connectivity options between VPCs and on-premises environments. The following whitepapers provide deeper insight into these:

We are going to focus on connecting ROSA VPCs to on premises or to egress VPCs using AWS Transit Gateway.

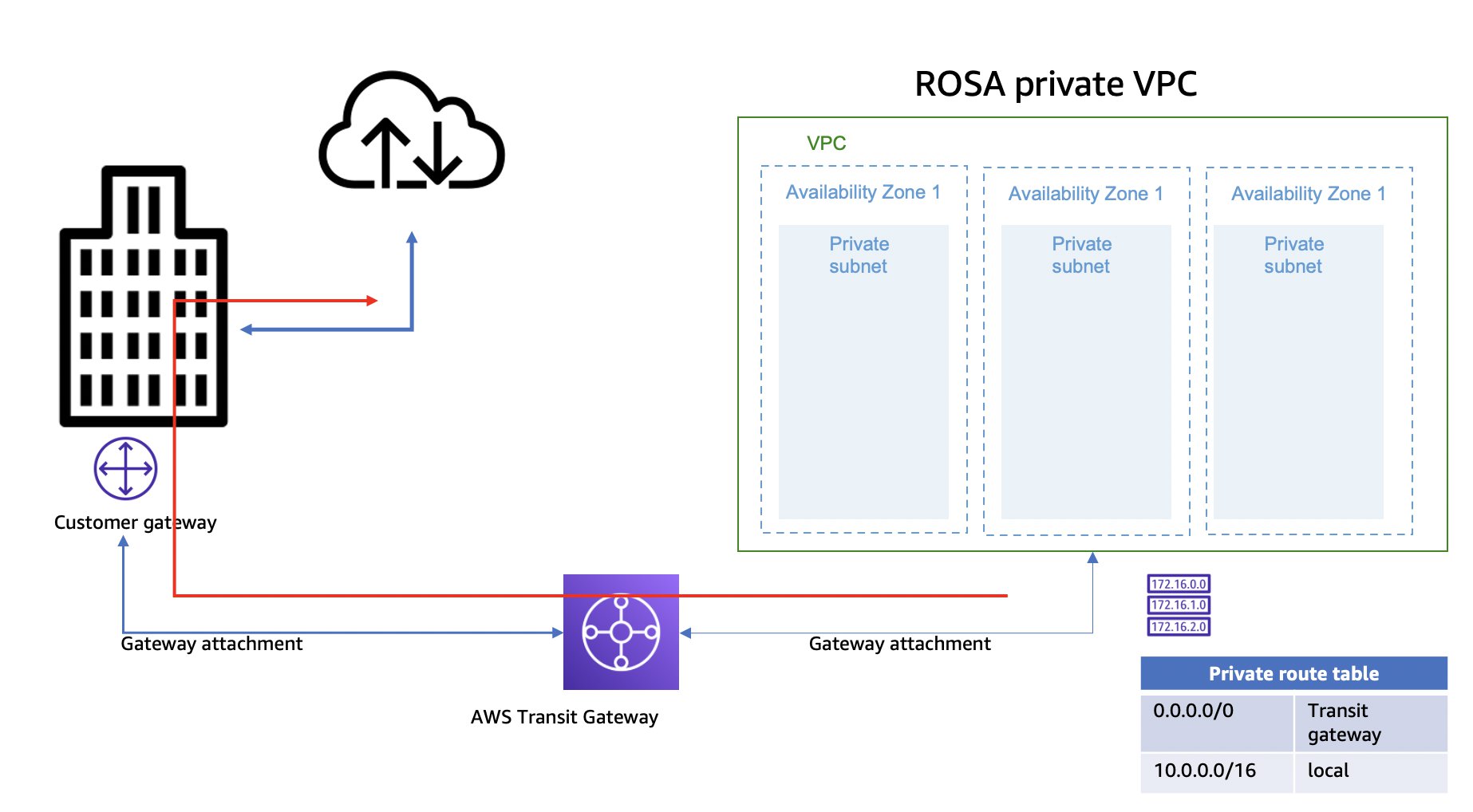

In this case, the VPC that ROSA will be deployed into has only private subnets and no internet gateway. Instead, the VPC will use Transit Gateway to connect and route traffic to an egress point.

ROSA VPC to an AWS egress VPC to provide a central control breakout point:

ROSA VPC to on-premises:

In both preceding cases, ROSA will be deployed into the private subnets. The ROSA install process needs to reach resources on the internet. The VPC structure must enable routing to the internet, as well as cater for name resolution. Prior to deployment, you should perform a careful inspection of route tables to ensure that return routing is in place.

Implementing transit gateway for your ROSA VPC:

We’ll begin by:

- Creating a transit gateway.

- Creating attachments from the VPCs to the gateway.

- Updating VPC and transit gateway route tables.

The transit gateway will need an attachment to the ROSA VPC, and a second attachment to either the Egress VPC or an endpoint that links back to on premises. In this case, we create one attachment to the private subnets within the Egress VPC and another to the ROSA VPC. Each attachment will link to three subnets in the VPC.
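A transit gateway VPC attachment takes one subnet per Availability Zone, so three subnets per attachment implies three distinct zones. The check below is a small sketch of that rule; the subnet names and their zone assignments are hypothetical placeholders, and in practice the mapping would come from your own VPC inventory.

```python
from collections import Counter


def attachment_subnet_problems(subnet_to_az, expected_az_count=3):
    """Return a list of problems with the subnets chosen for one VPC attachment."""
    problems = []
    per_az = Counter(subnet_to_az.values())
    for az, count in sorted(per_az.items()):
        if count > 1:
            problems.append(f"{az} has {count} subnets; an attachment takes one per zone")
    if len(per_az) != expected_az_count:
        problems.append(f"expected {expected_az_count} zones, found {len(per_az)}")
    return problems


if __name__ == "__main__":
    # Hypothetical subnet-to-zone mapping for the ROSA VPC attachment.
    rosa_subnets = {
        "subnet-private-a": "us-west-2a",
        "subnet-private-b": "us-west-2b",
        "subnet-private-c": "us-west-2c",
    }
    print(attachment_subnet_problems(rosa_subnets) or "subnet selection looks consistent")
```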

Once the attachments have been created, route tables must be updated to ensure that traffic can traverse the VPCs to the internet and back.

- Update the route table within the ROSA VPC adding a default route via the transit gateway.

- Add a route in the Egress VPC that points back to the CIDR of the ROSA VPC via the transit gateway.

- Update the route table for the transit gateway itself. The route entries for the VPCs will already be there. These are populated automatically when the attachments are created. We will need to add a default route via the attachment to the Egress VPC.
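The three route table updates above can be checked on paper before deployment. The sketch below models each route table as a dictionary and resolves the next hop by longest prefix match, which is how the most specific route is chosen. Everything concrete in it is an assumption for illustration: the Egress VPC CIDR, the target labels such as `tgw` and `attachment-egress`, and the choice to model the Egress VPC with the single route table that needs the return route.

```python
import ipaddress


def next_hop(route_table, destination):
    """Return the target of the most specific route matching destination, or None."""
    address = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(cidr), target)
        for cidr, target in route_table.items()
        if address in ipaddress.ip_network(cidr)
    ]
    if not matches:
        return None
    return max(matches, key=lambda match: match[0].prefixlen)[1]


def routing_problems(rosa_rt, tgw_rt, egress_rt, node_ip, internet_ip):
    """Return human-readable problems; an empty list means both directions resolve."""
    expected = [
        ("ROSA VPC -> internet", rosa_rt, internet_ip, "tgw"),
        ("transit gateway -> internet", tgw_rt, internet_ip, "attachment-egress"),
        ("egress VPC -> ROSA VPC (return)", egress_rt, node_ip, "tgw"),
        ("transit gateway -> ROSA VPC (return)", tgw_rt, node_ip, "attachment-rosa"),
    ]
    return [
        f"{label}: expected {want}, got {next_hop(table, ip)}"
        for label, table, ip, want in expected
        if next_hop(table, ip) != want
    ]


# Hypothetical CIDRs and target labels, for illustration only.
ROSA_VPC_CIDR = "10.1.0.0/16"
EGRESS_VPC_CIDR = "10.2.0.0/16"

rosa_rt = {ROSA_VPC_CIDR: "local", "0.0.0.0/0": "tgw"}
tgw_rt = {
    ROSA_VPC_CIDR: "attachment-rosa",      # populated when the attachment is created
    EGRESS_VPC_CIDR: "attachment-egress",  # populated when the attachment is created
    "0.0.0.0/0": "attachment-egress",      # default route added by hand
}
egress_rt = {
    EGRESS_VPC_CIDR: "local",
    "0.0.0.0/0": "internet-gateway",
    ROSA_VPC_CIDR: "tgw",                  # return route back to the ROSA VPC
}

if __name__ == "__main__":
    for problem in routing_problems(rosa_rt, tgw_rt, egress_rt, "10.1.10.5", "203.0.113.10"):
        print(problem)
```

Removing the `ROSA_VPC_CIDR` entry from `egress_rt` makes the return check fail, because replies would then follow the default route out of the Egress VPC instead of going back across the transit gateway.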

ROSA can then be deployed into the private subnets of the ROSA VPC.

Application owners and platform teams can self-serve through the use of AWS Control Tower and AWS Service Catalog. Common building blocks, such as a VPC, can be provided as an infrastructure as code template, then listed on AWS Service Catalog. Teams can deploy the ROSA VPC from the catalog and then deploy ROSA. To dive deeper into this, read our Self-service VPCs in AWS Control Tower using AWS Service Catalog blog.

Troubleshooting:

Here are a few checks and validations to avoid issues:

- Validate that routing, specifically return routing, is in place. During provisioning:

  - the OpenShift clusters will need to access resources on the internet

  - the EC2 instances in the ROSA VPC will need to be able to route across the transit gateway to some point of egress

  - traffic will need a return path

- Firewall configuration: It is common for customers to have a shared services infrastructure or security egress layer, such as a Palo Alto firewall. If this does not allow HTTPS traffic out to the internet from the ROSA VPC, it will hinder provisioning.

- Network ACLs: Because ROSA with PrivateLink is deployed into an existing VPC, ensure the network ACLs on the subnets allow the required traffic in and out of the ROSA VPC. The ROSA cluster provisioning will create the desired security groups.

- Desired state configuration: Should there be automation in place to revert changes to AWS infrastructure, it is recommended that teams interface with each other to make sure this does not hinder ROSA provisioning. In one case, the provisioned ROSA cluster generated a collection of security groups. The customer’s security and desired state automation had not considered this, and the tool stripped out the rules from the ROSA security groups, preventing communication. Once identified, the security team configured rules for ROSA in the desired state tool.
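For the network ACL check in particular, remember that network ACLs are stateless: allowing HTTPS out is not enough unless the reply is also allowed back in on an ephemeral port. The sketch below evaluates a simplified rule list the way a network ACL does, lowest rule number first with an implicit final deny. It is a simplification under stated assumptions: it ignores the protocol field, the `AclRule` type and the example rules are hypothetical, and the ports shown are illustrative rather than the full set of traffic ROSA requires.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class AclRule:
    number: int     # rules are evaluated in ascending order of this number
    cidr: str       # destination for outbound rules, source for inbound rules
    port_from: int
    port_to: int
    allow: bool


def acl_allows(rules, remote_ip, port):
    """Return the decision of the first matching rule, or False if none match."""
    address = ipaddress.ip_address(remote_ip)
    for rule in sorted(rules, key=lambda r: r.number):
        in_cidr = address in ipaddress.ip_network(rule.cidr)
        if in_cidr and rule.port_from <= port <= rule.port_to:
            return rule.allow
    return False  # mirrors the implicit final deny rule


def https_round_trip_ok(outbound, inbound, remote_ip, ephemeral_port=50000):
    """True if a request to remote_ip:443 can leave and its reply can return."""
    request_out = acl_allows(outbound, remote_ip, 443)
    reply_in = acl_allows(inbound, remote_ip, ephemeral_port)
    return request_out and reply_in


# Hypothetical rule sets for a ROSA private subnet.
outbound = [AclRule(100, "0.0.0.0/0", 443, 443, True)]
inbound_ok = [AclRule(100, "0.0.0.0/0", 1024, 65535, True)]
inbound_vpc_only = [AclRule(100, "10.1.0.0/16", 0, 65535, True)]

if __name__ == "__main__":
    print("with ephemeral ports open:", https_round_trip_ok(outbound, inbound_ok, "203.0.113.10"))
    print("with VPC-only inbound:", https_round_trip_ok(outbound, inbound_vpc_only, "203.0.113.10"))
```

The second rule set is the kind of configuration that lets the request leave but silently drops the reply, which shows up during provisioning as timeouts rather than explicit errors.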

The majority of issues encountered originate from not having all the correct stakeholders aware of each other. Ensure application platform owners, networking teams, security teams, and cloud centers of excellence are included.